Schedulers Beat Agents

7 stories · ~7 min read

If You Only Read One Thing

The new scarcity is not model intelligence; it is scheduling. Cursor's Build in Parallel makes agent work a coordination problem, while Gemini 3.1 Flash-Lite makes model choice a routing problem. Read Cursor's May 7 changelog because it shows the IDE turning into an operations console, not a better autocomplete box.

Cursor Adds The Scheduler

Cursor's new release is not really about doing more work in parallel. It is about making parallel work reviewable enough to survive.

The Cursor 3.3 changelog adds a PR review surface, "Build in Parallel" for plans, and a quick action that splits changes into separate PRs. The prior version of this workflow was a human running several agents in worktrees, then manually remembering which branch did what. Cursor is now productizing the missing middle: identify independent tasks, keep dependent steps ordered, propose a split plan, create a backup snapshot, and turn the result into reviewable PRs.

That matters because parallel agents are a distributed-systems problem hiding inside a developer tool. A single coding agent fails by making a bad edit. Several agents fail by duplicating work, touching the same file, blocking on the same local service, or handing a human four plausible diffs with no integration story. Latent Space's earlier Cursor cloud-agents interview framed the shift well: cloud agents needed real computers, testing, demos, and remote control because the review bottleneck moved from "did it write code?" to "can I trust what happened while I was away?"
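One of those failure modes, two agents touching the same file, is cheap to check before anything is merged. The sketch below is illustrative only: the agent names and file paths are hypothetical, and nothing here reflects how Cursor actually detects overlap.

```python
from itertools import combinations


def find_collisions(touched: dict[str, set[str]]) -> dict[tuple[str, str], set[str]]:
    """Return every pair of agents whose edited-file sets overlap.

    `touched` maps an agent name to the set of file paths it modified.
    """
    collisions: dict[tuple[str, str], set[str]] = {}
    for a, b in combinations(sorted(touched), 2):
        shared = touched[a] & touched[b]
        if shared:
            collisions[(a, b)] = shared
    return collisions


# Hypothetical run: three agents working in separate worktrees.
touched_files = {
    "agent_auth": {"src/auth.py", "src/settings.py"},
    "agent_billing": {"src/billing.py", "src/settings.py"},
    "agent_docs": {"README.md"},
}
collisions = find_collisions(touched_files)
```

Here `collisions` flags only the auth/billing pair, which share `src/settings.py`. That pair needs a human or a serialized step; the docs agent can merge independently.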

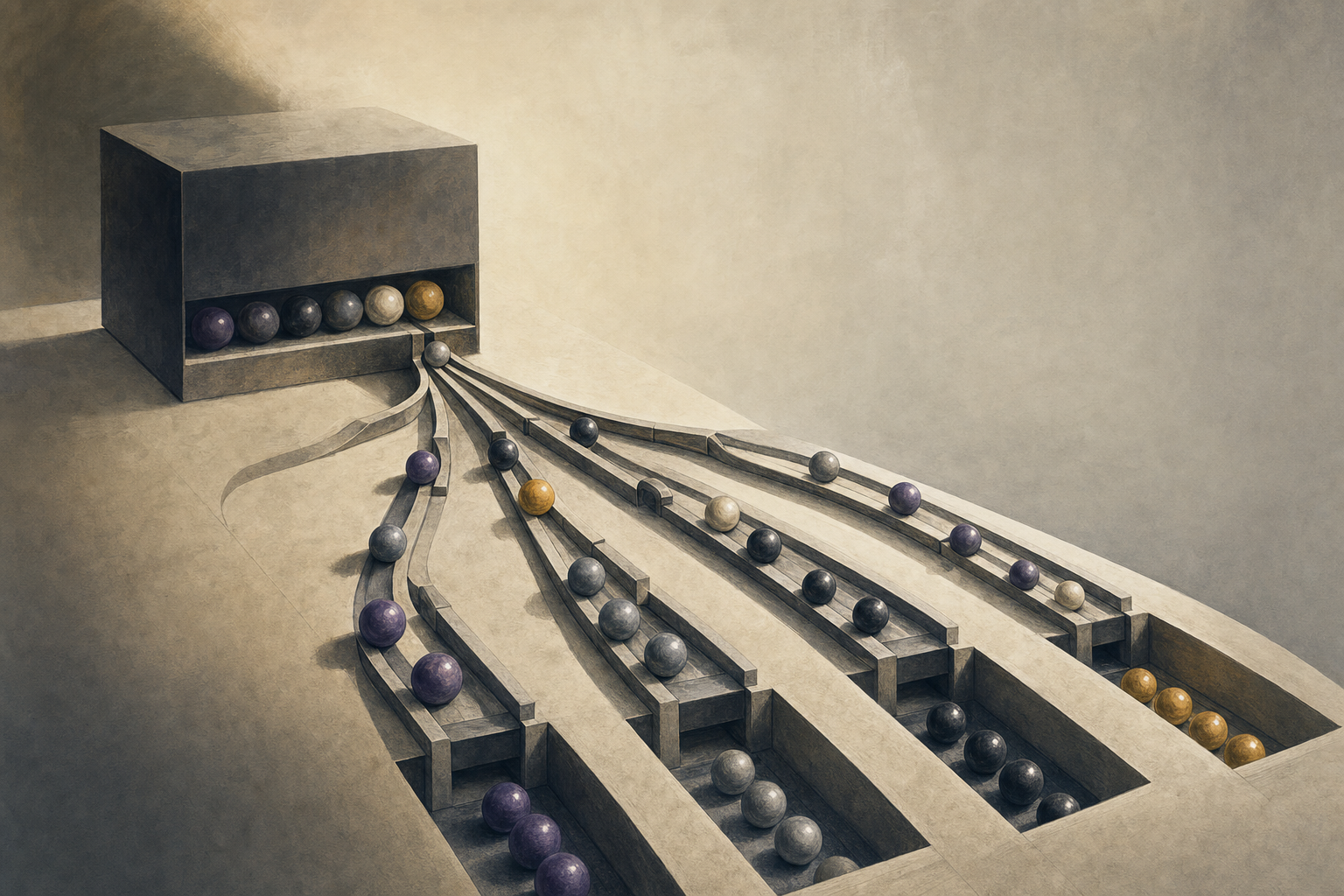

Why it matters: The structural change is that the agent product is becoming a scheduler. The model writes code, but the product decides which work can run concurrently, what evidence comes back, where review happens, and how changes are cut into mergeable units. That makes the interface more like a small operating system for software work than an editor with a chat panel. The important primitive is not "spawn more agents"; it is a dependency graph that separates independent work from work that must serialize. If Cursor can make that graph accurate, parallel agents raise throughput. If it cannot, they convert model latency into human integration debt.
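To make the dependency-graph idea concrete, here is a minimal sketch that groups tasks into waves: everything in a wave can run concurrently, and each wave waits for the one before it. It uses Python's standard-library `graphlib`. The task names are hypothetical, and this is not a description of Cursor's planner.

```python
from graphlib import TopologicalSorter


def plan_waves(deps: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into waves of mutually independent work.

    `deps` maps each task to the set of tasks it depends on.
    """
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError if the plan has a cycle
    waves: list[list[str]] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # tasks with no unmet dependencies
        waves.append(ready)
        sorter.done(*ready)  # mark the wave finished so dependents unlock
    return waves


# Hypothetical plan: schema work must land before anything that uses it.
plan = {
    "update_schema": set(),
    "fix_docs_typo": set(),
    "add_api_endpoint": {"update_schema"},
    "write_migration": {"update_schema"},
    "integration_test": {"add_api_endpoint", "write_migration"},
}
waves = plan_waves(plan)
```

With this plan, the first wave is the schema update and the docs fix, the second is the endpoint and the migration, and the integration test runs alone at the end. An inaccurate graph shows up as either a needlessly long chain of single-task waves or a wave containing tasks that actually conflict.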

Room for disagreement: The skeptical read is strong: parallel coding agents can still make the same project worse faster. The evidence that would settle this is not launch copy; it is merge-conflict rate, reverted-agent PRs, review time per accepted change, and whether the split-PR action actually reduces integration failures on large repos.

Flash-Lite Prices Routing

Google's quiet May 7 update to Gemini 3.1 Flash-Lite is easy to misread as another cheap-model SKU. The more important point is that the cheap model now has enough surface area to become the router.

Google's Gemini API changelog made gemini-3.1-flash-lite generally available and says the preview alias shuts down later this month. The model page gives it a 1,048,576-token input window, 65,536 output-token limit, multimodal input, function calling, structured outputs, file search, code execution, caching, flex inference, and configurable thinking. The pricing page lists standard paid pricing at $0.25 per million text/image/video input tokens and $1.50 per million output tokens, with batch and flex at half that.
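The arithmetic behind those list prices is worth seeing once. This sketch uses only the standard paid rates quoted above and the stated half-price batch and flex tiers. The token counts for the routing call are an assumption, and caching and any per-modality differences are ignored.

```python
INPUT_USD_PER_M = 0.25        # standard paid, text/image/video input
OUTPUT_USD_PER_M = 1.50       # standard paid, output
BATCH_OR_FLEX_FACTOR = 0.5    # batch and flex are listed at half the standard rate


def call_cost_usd(input_tokens: int, output_tokens: int, discounted: bool = False) -> float:
    """Estimate the list-price cost of one call in US dollars."""
    cost = (
        input_tokens / 1_000_000 * INPUT_USD_PER_M
        + output_tokens / 1_000_000 * OUTPUT_USD_PER_M
    )
    return cost * BATCH_OR_FLEX_FACTOR if discounted else cost


# Assumed shape of a small routing call: 2,000 tokens in, 50 tokens out.
routing_call = call_cost_usd(2_000, 50)

# Upper bound: a call that fills the full input window and output limit.
max_call = call_cost_usd(1_048_576, 65_536)
```

Under those assumptions a routing call costs about $0.000575, so a million routing decisions come to roughly $575 at standard rates. A call that fills the entire window costs about $0.36.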

The benchmark shape is the tell. Google's model card reports 363 output tokens per second, 72.0% on LiveCodeBench, 86.9% on GPQA Diamond, and 16.0% on Humanity's Last Exam, which puts it roughly in the same neighborhood as heavier "mini" or "fast" competitors on several evals. But it also reports weak 1M-context MRCR performance at 12.3%, below Gemini 2.5 Flash's 21.0%, and the model does not support computer use or the Live API. In plain English: this is not the model you want doing the whole job. It is the model you can afford to call constantly to decide what the job is.

Why it matters: The center of gravity in inference economics is moving from "which model is best?" to "which model should see this step?" Flash-Lite is designed for classification, extraction, translation, summarization, and lightweight agentic routing: cheap enough to sit in front of expensive models, capable enough to make structured decisions, and fast enough to avoid becoming the bottleneck. That changes how agent systems are measured. A bad router wastes money by escalating too much or silently destroys quality by escalating too little. The model-pick problem becomes an eval problem for the routing policy, not just a leaderboard problem for the worker model.
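A routing-policy eval can start very small. The sketch below assumes you have labeled examples recording which tier the router chose and which tier the step actually needed. The tier names and their ordering are hypothetical, not any vendor's scheme.

```python
from dataclasses import dataclass

# Hypothetical tiers, ordered from cheapest to most capable.
TIERS = {"none": 0, "lite": 1, "frontier": 2}


@dataclass(frozen=True)
class RouteResult:
    chosen: str  # tier the router picked
    needed: str  # cheapest tier that would have done the step correctly


def escalation_rates(results: list[RouteResult]) -> dict[str, float]:
    """Split routing outcomes into correct, over-escalated, and under-escalated."""
    if not results:
        raise ValueError("need at least one labeled routing decision")
    n = len(results)
    over = sum(TIERS[r.chosen] > TIERS[r.needed] for r in results)   # wasted money
    under = sum(TIERS[r.chosen] < TIERS[r.needed] for r in results)  # lost quality
    return {
        "accuracy": (n - over - under) / n,
        "over_escalation": over / n,
        "under_escalation": under / n,
    }


# Made-up labels for illustration only.
labeled = [
    RouteResult("lite", "lite"),
    RouteResult("frontier", "frontier"),
    RouteResult("frontier", "lite"),  # escalated when it did not need to
    RouteResult("lite", "frontier"),  # kept cheap when it should have escalated
]
rates = escalation_rates(labeled)
```

The two error types are reported separately on purpose: over-escalation shows up on the bill, while under-escalation shows up only if someone is checking output quality.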

What to watch: The next useful benchmark will score route decisions: how often a cheap gatekeeper correctly sends work to Flash, Pro, Claude, GPT, local models, or no model at all, and what that does to cost per completed workflow.
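Cost per completed workflow is simple to compute once each run is logged with its total spend and whether it finished. The numbers below are invented purely to show the calculation; they are not measurements of any model or router.

```python
def cost_per_completed(runs: list[tuple[float, bool]]) -> float:
    """Total spend divided by the number of workflows that actually completed.

    Each run is (cost_usd, completed). Failed runs still count toward spend.
    """
    total = sum(cost for cost, _ in runs)
    completed = sum(1 for _, ok in runs if ok)
    if completed == 0:
        return float("inf")  # money spent, nothing finished
    return total / completed


# Invented data: four workflows under two hypothetical policies.
always_frontier = [(0.5, True), (0.5, True), (0.5, True), (0.5, True)]
routed = [(0.125, True), (0.125, True), (0.125, False), (0.5, True)]

frontier_cost = cost_per_completed(always_frontier)
routed_cost = cost_per_completed(routed)
```

In this toy data the routed policy fails one workflow yet still comes out cheaper per completed workflow (about $0.29 versus $0.50). The metric only means something alongside the completion rate, since a router could look cheap by quietly failing the hard cases.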

The Contrarian Take

Everyone says: More parallel agents and cheaper models mean the automation curve is steepening again.

Here's why that's wrong, or at least incomplete: The obvious gain is speed, but the binding constraint is coordination. Cursor's release is interesting because it admits that parallel work needs ordering, snapshots, split PRs, and review state. Flash-Lite is interesting because it admits that not every step deserves a frontier model. The durable advantage will belong to systems that schedule work, route models, and verify outputs, not systems that merely add another agent button.

Under the Radar

- Claude Code exposes more harness policy - The 2.1.133 changelog adds worktree.baseRef, passes active effort level into hooks, releases warm-spare workers under memory pressure, and fixes a credential-refresh race that could dead-end parallel sessions. Those are not flashy features. They are the control surfaces that keep multi-agent local work from breaking in ways the model cannot reason about.

- OpenAI is library-fying the harness - OpenAI's Agents SDK docs now expose sandbox agents, orchestration, guardrails, observability, workflow evals, and voice-agent primitives, while the Codex developers plugin wires OpenAI Platform setup directly into Codex. The pattern is clear: vendors are competing not only on models, but on who owns the default scaffolding around file access, tools, approvals, and credentials.

Quick Takes

- Codex gets a platform setup plugin: The OpenAI Developers plugin lets Codex connect to the OpenAI Platform, create and store project API keys, and troubleshoot common API failures. The small signal is that API onboarding is moving inside the coding agent, which turns setup friction into an agent task rather than documentation reading. (Source)

- Realtime voice gets a reasoning dial: gpt-realtime-2 supports speech-to-speech interactions with configurable reasoning effort, stronger instruction following, and tool use, with text input at $4 per million tokens and text output at $24. Voice agents now have the same cost-latency tradeoff that text agents already face. (Source)

- Gemini's agent schema is still moving: Google says the Gemini Interactions API will change request/response schema from outputs to steps, with the new default on May 26 and legacy removal on June 8. The practical signal is that agent APIs are still protocol surfaces, not stable plumbing. (Source)

The Thread

Today's thread is scheduling. Cursor is scheduling tasks across agents. Gemini Flash-Lite is scheduling model spend across steps. Claude Code and OpenAI are exposing harness knobs because scheduling fails when credentials, worktrees, sandboxes, and tool state are hidden. The model still matters, but the higher-value layer is deciding what runs where, in what order, with what evidence attached. That is where agent reliability becomes an engineering discipline rather than a demo category.

Predictions

New predictions:

- I predict: By 2026-08-31, at least two major coding-agent products will publish or expose collision, merge-conflict, revert-rate, or review-time metrics for parallel-agent workflows. (Confidence: medium; Check by: 2026-08-31)

- I predict: By 2026-07-31, at least one public model-routing benchmark or vendor report will measure route accuracy and cost per completed workflow, not just standalone model scores. (Confidence: medium; Check by: 2026-07-31)

Coming Next Week

Next week, the useful deep dive is agent scheduling: how worktrees, sandboxes, model routers, and review queues are becoming the real runtime for coding agents.

May 8, 2026, 3:40 AM ET.
