The Unit Changed

7 stories · ~7 min read

If You Only Read One Thing

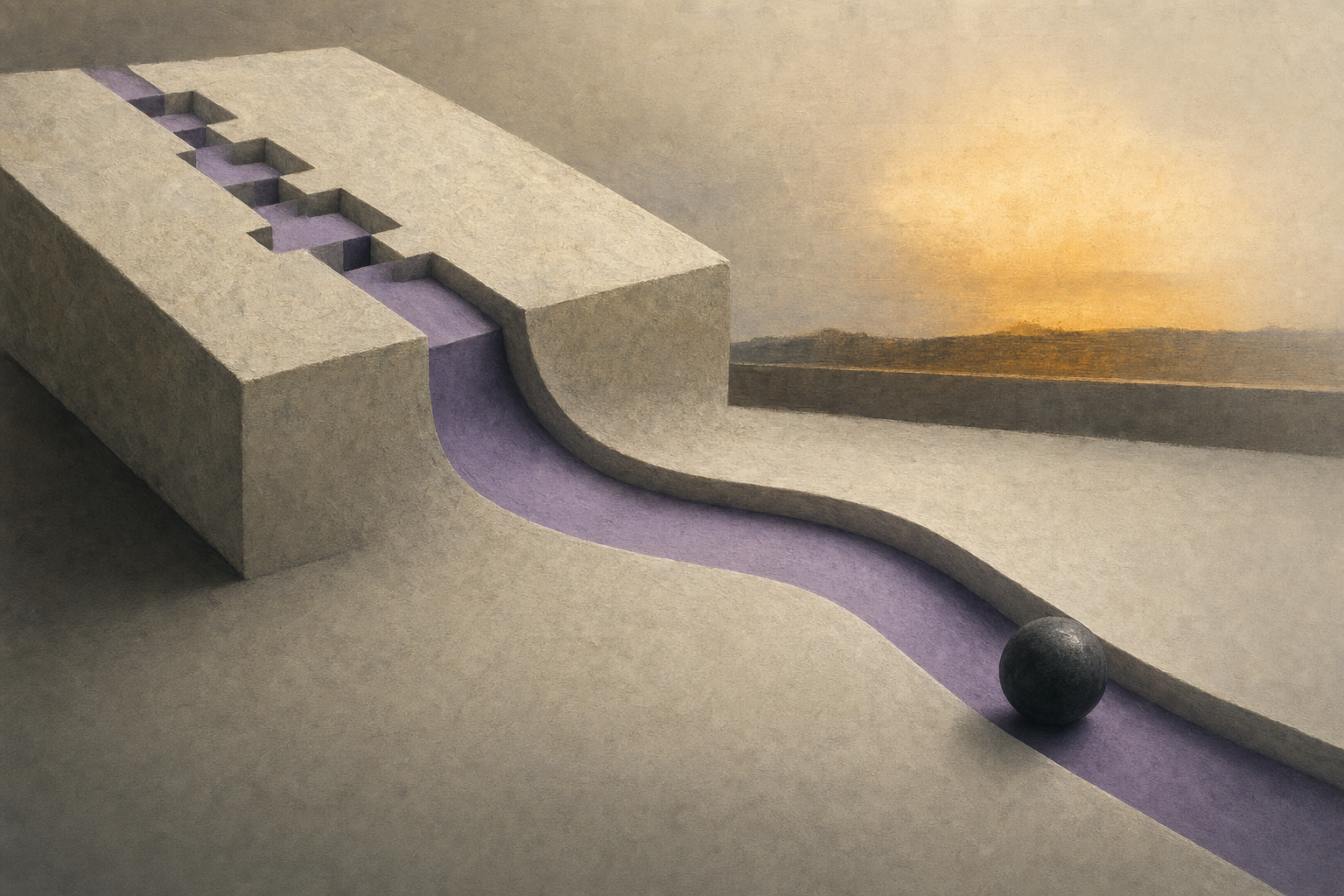

The quiet AI story is that progress is moving from bigger models to better units of work. Ai2's MolmoAct 2 turns robot control into open action reasoning, while Meta's tokenization paper says scaling laws should count bytes, not tokens. One story changes what models do; the other changes what training budgets measure. Both shifts are more durable than another leaderboard jump.

MolmoAct 2 Makes Actions Inspectable

The hardest part of robotics is not getting a model to describe the world. It is getting the model to turn a changing scene into a safe, timely movement.

Ai2's new MolmoAct 2 release is a major-lab attempt to make that action layer open enough to study. The Ai2 post says last year's MolmoAct was trained on 22 hours of curated in-house data, about 10.6K successful robot trajectories, plus Open X-Embodiment data. MolmoAct 2 replaces that thin base with a specialized embodied-reasoning vision-language backbone, about 3M additional spatial examples, an open action tokenizer, and a flow-matching action expert connected to the vision model through a key-value cache bridge. In plain English: the system tries to preserve explicit spatial reasoning while generating continuous robot movements, not just discrete text tokens.
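
Flow matching is a general technique, and this toy sketch shows only its sampling loop. It starts from Gaussian noise and takes fixed Euler steps along a velocity field until t = 1 yields a continuous action vector. The names `oracle_velocity` and `sample_action` are hypothetical. The oracle is an assumption standing in for a trained action expert: it is the exact velocity for a straight-line path to a known target, which during training corresponds to regressing x1 - x0 at the interpolated point (1 - t)·x0 + t·x1. This is not MolmoAct 2's architecture or code.

```python
import random
from typing import Callable, List

Vector = List[float]
VelocityField = Callable[[Vector, float], Vector]


def oracle_velocity(target: Vector) -> VelocityField:
    """Exact velocity for a straight-line path to `target`: v(x, t) = (target - x) / (1 - t).

    ASSUMPTION: stands in for a trained action expert, which would have to
    predict this velocity from camera input and instructions.
    """
    def v(x: Vector, t: float) -> Vector:
        return [(g - xi) / (1.0 - t) for g, xi in zip(target, x)]
    return v


def sample_action(velocity: VelocityField, dim: int, steps: int = 10, seed: int = 0) -> Vector:
    """Integrate dx/dt = velocity(x, t) from noise at t=0 to an action at t=1 with Euler steps."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in range(dim)]  # start from Gaussian noise
    dt = 1.0 / steps
    for i in range(steps):
        t = i * dt  # t < 1 on every step, so the oracle never divides by zero
        vel = velocity(x, t)
        x = [xi + dt * vi for xi, vi in zip(x, vel)]
    return x


if __name__ == "__main__":
    target = [0.2, -0.1, 0.05]  # hypothetical 3-D end-effector delta
    print(sample_action(oracle_velocity(target), dim=3))
```

With the oracle, the sampler lands on the target. The design point is that the output is a continuous vector produced by integration, not a sequence of discrete text tokens.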

The numbers matter because they move the story from demo to operating constraint. On LIBERO, measured on a single H100, Ai2 reports a single action call of about 180 ms for the base model and 790 ms for MolmoAct 2-Think, compared with 6,700 ms for the original MolmoAct. It also released the Bimanual YAM dataset, with more than 720 hours of two-arm demonstrations, and reports 87.1% average success in real-world zero-shot Franka tests versus 48.4% for MolmoBot and 45.2% for pi0.5. Ai2 is candid about the limit: training code is "coming soon."
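
The reported latencies are easier to compare as rates. This sketch only does arithmetic on Ai2's LIBERO numbers. The dictionary keys and helper names are ours, and treating one action call as one control step is an assumption: policies that emit several actions per call can control faster than the call rate.

```python
# Back-of-envelope arithmetic on Ai2's reported LIBERO latencies (single H100).
# ASSUMPTION: one action call = one control step; action chunking would raise
# the effective control rate above the call rate computed here.
LATENCY_MS = {
    "MolmoAct": 6_700,
    "MolmoAct 2 (base)": 180,
    "MolmoAct 2-Think": 790,
}


def calls_per_second(latency_ms: float) -> float:
    """Upper bound on sequential action calls per second at a given latency."""
    return 1_000.0 / latency_ms


def speedup(baseline_ms: float, new_ms: float) -> float:
    """How many times faster new_ms is than baseline_ms."""
    return baseline_ms / new_ms


if __name__ == "__main__":
    base = LATENCY_MS["MolmoAct"]
    for name, ms in LATENCY_MS.items():
        print(f"{name:<18} {ms:>6,} ms  {calls_per_second(ms):5.2f} calls/s  "
              f"{speedup(base, ms):5.1f}x vs MolmoAct")
```

Run as a script, it shows the base model at roughly 5.6 calls per second and about 37x faster than MolmoAct, MolmoAct 2-Think at roughly 1.3 calls per second and about 8.5x faster, and the original MolmoAct at about 0.15 calls per second.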

Why it matters: Robotics foundation models need a shared action substrate the way language models needed shared tokenizers and benchmarks. A vision-language-action model, meaning a model that maps camera input and instructions into robot behavior, is only useful if other labs can inspect the data, adapt the policy, and reproduce the failure modes. MolmoAct 2 is interesting because it pushes three pieces into the open at once: reasoning backbone, action representation, and bimanual data. That does not make general-purpose robots solved. It changes the bottleneck from "can a lab show a robot video?" to "can the field compare action policies under common hardware, latency, and safety constraints?"

Room for disagreement: Ai2 is still reporting many of the headline results itself, and the repository says the complete code path has not been released yet. The strongest confirmation would be independent robot-lab replications on unfamiliar hardware, especially tasks where camera occlusion and calibration errors dominate.

What to watch: The useful follow-up is whether MolmoAct 2 becomes a base checkpoint for other robot groups, not whether it wins one more benchmark. Adoption would show that open action reasoning is becoming infrastructure.

Tokenization Becomes Training Economics

Tokens are treated like the natural unit of language-model training. Meta's new paper is a useful reminder that they are an accounting convention.

In Compute Optimal Tokenization, Meta researchers study how token compression rate, measured as average bytes of text per token, changes scaling laws. The paper trains 988 latent-tokenized models from 50M to 7B parameters, varying compression beyond the 4.57 bytes per token produced by a common byte-pair tokenizer. The key result is blunt: in compute-optimal configurations, model size scales with data measured in bytes, not data measured in tokens. The paper also finds that the optimal compression rate differs from conventional byte-pair encoding and decreases as compute rises.

This sounds like a tokenizer footnote until you remember how the industry talks about training. Kaplan-style and Chinchilla-style scaling laws taught labs to reason about model size, data, and compute, but the usual shorthand treats tokens as if one token is a stable amount of information. It is not. A tokenizer is the compression layer that decides whether a word, fragment, symbol, or byte sequence counts as one model step. Change the compression scheme and "one trillion tokens" can mean a different quantity of text, a different context burden, and a different training budget.
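
The paper's compression rate is simple arithmetic: bytes of text divided by tokens. This sketch uses two deliberately crude, hypothetical tokenizers (one token per UTF-8 byte, and whitespace splitting) rather than byte-pair encoding. They only show the same text producing different token counts. It then converts a token budget back to bytes.

```python
# Compression rate = UTF-8 bytes of text / number of tokens.
# ASSUMPTION: both tokenizers are crude illustrative stand-ins, not byte-pair encoding.
from typing import List


def compression_rate(text: str, tokens: List[object]) -> float:
    """Average bytes of UTF-8 text per token."""
    return len(text.encode("utf-8")) / len(tokens)


def byte_tokenize(text: str) -> List[int]:
    """One token per UTF-8 byte (compression rate 1.0 for any non-empty text)."""
    return list(text.encode("utf-8"))


def whitespace_tokenize(text: str) -> List[str]:
    """One token per whitespace-separated word."""
    return text.split()


def tokens_to_bytes(num_tokens: float, bytes_per_token: float) -> float:
    """Convert a token budget into bytes of text at a given compression rate."""
    return num_tokens * bytes_per_token


if __name__ == "__main__":
    s = "scaling laws should count bytes"  # 31 bytes
    print(compression_rate(s, byte_tokenize(s)))        # 1.0
    print(compression_rate(s, whitespace_tokenize(s)))  # 6.2
    for bpt in (4.57, 3.0):  # 4.57 is the paper's BPE figure; 3.0 is hypothetical
        print(f"1T tokens at {bpt} B/token = {tokens_to_bytes(1e12, bpt) / 1e12:.2f} TB")
```

At the paper's 4.57 bytes per token, one trillion tokens is about 4.57 TB of text. At a hypothetical 3.0 bytes per token, the same "one trillion tokens" is about 3.0 TB.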

Why it matters: Tokenization is becoming part of the cost model, not just preprocessing. If bytes are the more stable unit, then token counts are a lab-specific denominator that can distort comparisons across languages, model families, and context-window claims. A model that looks data-rich in tokens may be less data-rich in bytes; a tokenizer that reduces token count may lower attention cost while changing how the model allocates capacity to rare words, code, or non-English text. The structural implication is that training reports should stop treating token count as self-explanatory. The more honest unit is closer to "how many bytes of information did the model actually learn from, and at what compression rate?"
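
The attention-cost point can be made concrete. Dense self-attention has one score per query-key pair, so the following holds, assuming dense attention throughout. If the bytes are held fixed and the compression rate rises from r_old to r_new, the token count scales by r_old / r_new and the score count by its square. The function names, the 1 MB document, and the 128K-token window are hypothetical illustrations.

```python
# Same text, different compression rates: fewer tokens, quadratically fewer attention scores.
# ASSUMPTION: dense self-attention (one score per query-key pair, per head and layer);
# the helper names and example numbers are illustrative, not from the paper.


def tokens_for(doc_bytes: float, bytes_per_token: float) -> float:
    """Average token count needed to cover doc_bytes at a given compression rate."""
    return doc_bytes / bytes_per_token


def attention_cost_ratio(old_bpt: float, new_bpt: float) -> float:
    """Attention-score count under new_bpt relative to old_bpt, for the same bytes."""
    return (old_bpt / new_bpt) ** 2


def window_bytes(window_tokens: int, bytes_per_token: float) -> float:
    """How much raw text a fixed token window holds at a given compression rate."""
    return window_tokens * bytes_per_token


if __name__ == "__main__":
    doc = 1_000_000  # bytes, hypothetical document
    for bpt in (4.0, 4.57, 6.0):
        n = tokens_for(doc, bpt)
        print(f"{bpt:4.2f} B/tok: {n:>9,.0f} tokens, {n * n:.2e} scores, "
              f"128K window = {window_bytes(128_000, bpt):,.0f} bytes")
```

Doubling bytes per token from 4.0 to 8.0, for example, quarters the score count while changing how much text a "128K-token" window contains. The sketch says nothing about the other half of the trade-off: how the model allocates capacity to rare words, code, or non-English text.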

Room for disagreement: The study reaches 7B parameters, not frontier scale, and relies heavily on latent tokenization experiments. The result is still important because it attacks a measurement assumption that every frontier lab uses. If larger runs reverse the finding, labs should be able to show that directly.

What to watch: Model cards should begin reporting bytes, compression rate, and tokenizer family next to token counts. That would be the signal that tokenization has moved from implementation detail to scaling-law variable.

The Contrarian Take

Everyone says: Today's robotics and tokenization papers are separate technical stories: one about physical AI, the other about language-model training.

Here's why that's wrong, or at least incomplete: They are both unit-of-account stories. MolmoAct 2 says robot progress should be measured in action calls, bimanual demonstrations, latency, and real-world task success, not only visual reasoning scores. Compute Optimal Tokenization says language-model progress should be measured in bytes and compression rate, not only tokens. The common shift is away from model-size theater and toward the substrate that determines whether intelligence can be trained, compared, and deployed.

Under the Radar

- Clinical agents fail inside the workflow: PhysicianBench evaluates agents on 100 long-horizon EHR tasks across 21 specialties, with an average of 27 tool calls and 670 execution-grounded checkpoints. GPT-5.5 leads at 46.3% pass@1, and the benchmark attributes about 52% of failed checkpoints to clinical reasoning. This is the medical-agent version of the same lesson: knowledge recall is not workflow competence.

- Meta treats code release as a safety-eval decision: the Code World Model preparedness report says Meta evaluated CWM against its Frontier AI Framework before releasing the model as open weights. The interesting part is not the conclusion alone; it is that open-weight code models are now being routed through domain-specific capability and propensity checks before release.
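
PhysicianBench results are reported as pass@1. A common way to compute pass@k is the unbiased estimator introduced with OpenAI's HumanEval benchmark. With n sampled attempts on a task, of which c pass, pass@k = 1 - C(n-c, k) / C(n, k), averaged over tasks. Whether PhysicianBench uses exactly this estimator is an assumption, and the function names are ours.

```python
from math import comb
from typing import Iterable, Tuple


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k for one task: n attempts sampled, c of them passed."""
    if n - c < k:
        return 1.0  # every size-k subset of attempts contains at least one pass
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_k(results: Iterable[Tuple[int, int]], k: int) -> float:
    """Average per-task pass@k over (n, c) pairs, one pair per task."""
    scores = [pass_at_k(n, c, k) for n, c in results]
    return sum(scores) / len(scores)
```

For k = 1 the estimator reduces to c / n. With one attempt per task, each task scores 0 or 1; averaging several attempts per task yields fractional per-task scores.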

Quick Takes

- Multi-turn RL is getting an exploration budget. T2PO, or Token- and Turn-level Policy Optimization, tries to stabilize agent reinforcement learning by detecting low-information tokens and turns, then intervening or resampling instead of spending rollouts blindly. The useful idea is that agent training collapse may be partly a bad-exploration problem, not only a reward-design problem. (Source)

- Context skills are becoming self-play artifacts. Ctx2Skill uses Challenger, Reasoner, and Judge agents to derive task-specific natural-language skills from long contexts without human annotation. The paper is too early for a deep slot, but the direction matters: prompt context is being treated as a thing agents can mine, test, and revise, not just read once. (Source)

- Agent medicine is moving from answers to orders. PhysicianBench's task design uses FHIR-grounded actions, chart retrieval, medication decisions, treatment planning, and documentation. That makes it a more serious test than static medical QA because wrong answers become wrong workflow steps. The weak open-model scores, topping out below 20% pass@1, are the warning label. (Source)
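
The T2PO item does not describe how "low-information" tokens and turns are detected. The sketch below therefore swaps in a simple proxy, mean next-token entropy per turn, purely as an assumption. It flags turns where the policy's distributions are nearly deterministic, so a trainer could intervene on or resample them rather than spend rollouts blindly. All names and the threshold are hypothetical, not T2PO's method.

```python
import math
from typing import List, Sequence


def token_entropy(probs: Sequence[float]) -> float:
    """Shannon entropy (nats) of one next-token probability distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def low_information_turns(turns: List[List[Sequence[float]]], threshold: float) -> List[int]:
    """Indices of turns whose mean per-token entropy falls below `threshold`.

    ASSUMPTION: entropy is a stand-in for T2PO's actual low-information signal.
    Each turn is a list of per-token probability distributions.
    """
    flagged = []
    for i, turn in enumerate(turns):
        mean_h = sum(token_entropy(p) for p in turn) / len(turn)
        if mean_h < threshold:
            flagged.append(i)  # candidate for intervention or resampling
    return flagged
```

A near-deterministic turn such as `[[0.99, 0.01], [0.98, 0.02]]` has mean entropy under 0.1 nats and gets flagged at a 0.3 threshold. A turn such as `[[0.5, 0.5], [0.6, 0.4]]` sits near ln 2 and does not.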

The Thread

The throughline is measurement discipline. Today's strongest technical signals are not "model X is smarter." They are "the unit was wrong." Robots need action-level accounting. Training laws need byte-level accounting. Clinical agents need workflow-level accounting. Code-model releases need risk-domain accounting. AI progress is becoming less about bigger abstractions and more about choosing the measurement unit that exposes the real constraint.

Predictions

New predictions:

- I predict: By 2026-08-31, at least two major model or training reports will publish bytes, bytes-per-token, or tokenizer compression rate alongside headline token counts. (Confidence: medium; Check by: 2026-08-31)

Generated May 5, 2026 at 12:48 PM ET.
