RecursiveMAS and ProDa Move Intelligence Below the Prompt

8 stories · ~7 min read

If You Only Read One Thing

The most important AI work today is happening below the visible prompt. RecursiveMAS moves agent collaboration into latent state instead of text chat, while ProDa treats training data like debuggable source code. Both argue that the bottleneck is no longer what a model says. It is what the system can pass, test, and repair.

RecursiveMAS Turns Agent Collaboration Into a Hidden-State Problem

Most multi-agent systems still look like meetings: one agent writes a message, another reads it, a critic comments, and the system pays for every word. That design is easy to inspect and expensive to scale.

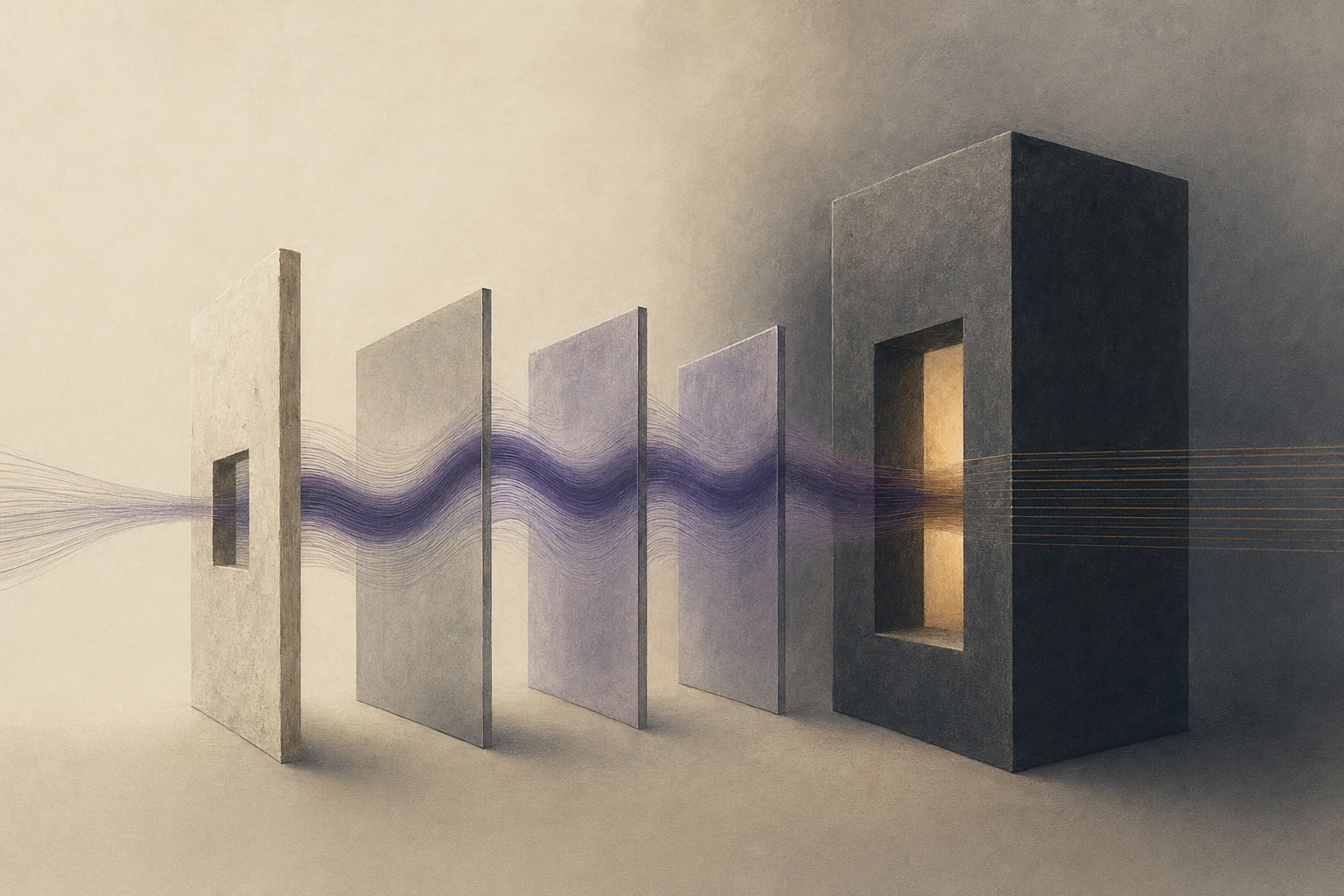

RecursiveMAS, a UIUC/Stanford/NVIDIA/MIT-linked project, attacks the communication interface itself. The system freezes the base language models and trains only about 13 million parameters in small RecursiveLink modules. Instead of forcing agents to exchange text after every step, it passes latent state, the model's internal vector representation, across agents and recursion rounds. Think of it as replacing a transcript with a shared working memory: intermediate agents can coordinate in continuous space, and only the final round has to decode back into language.
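A toy way to see what "replacing a transcript with shared working memory" buys is to compare two channels between a sender and a receiver. The sketch below is not RecursiveMAS code. It assumes a hypothetical four-token vocabulary with 2-D embeddings, models the text channel as "emit the nearest token, then re-embed it," and stands in for a RecursiveLink with a tiny linear adapter (`latent_link`, a hypothetical name). Two different internal states collapse to the same token over text but stay distinct over the latent path.

```python
import math

# Hypothetical toy vocabulary: each "word" is a fixed 2-D embedding.
VOCAB = {
    "yes": (1.0, 0.0),
    "maybe": (0.0, 1.0),
    "no": (-1.0, 0.0),
    "unsure": (0.0, -1.0),
}

def text_channel(h):
    """Serialize a hidden state as its nearest token, then re-embed that token."""
    token = min(VOCAB, key=lambda t: math.dist(VOCAB[t], h))
    return VOCAB[token]

def latent_link(h, weights=((1.0, 0.0), (0.0, 1.0)), bias=(0.0, 0.0)):
    """Pass the hidden state through a small linear adapter.

    Hypothetical stand-in for a trained link module; identity weights here.
    """
    return tuple(
        sum(w * x for w, x in zip(row, h)) + b
        for row, b in zip(weights, bias)
    )

# Two different internal states of the sending agent.
h1 = (0.62, -0.31)
h2 = (0.90, 0.20)

print(text_channel(h1), text_channel(h2))  # both collapse to the "yes" embedding
print(latent_link(h1), latent_link(h2))    # both survive unchanged
```

In this 2-D toy the adapter has six trainable numbers (a 2x2 matrix plus a bias); the paper's link modules reportedly total about 13 million parameters while the base models stay frozen.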

Why it matters: this reframes multi-agent systems from prompt orchestration to systems architecture. The old assumption was that collaboration meant more specialized agents and better prompts. RecursiveMAS says the expensive part is the translation layer: every intermediate text message compresses internal state into words, then asks the next model to reconstruct intent from those words. The reported numbers are strong enough to matter: across four collaboration patterns and nine benchmarks, RecursiveMAS reports an 8.3% average accuracy gain, a 1.2x to 2.4x end-to-end speedup, and a 34.6% to 75.6% token reduction relative to text-mediated multi-agent baselines. The system also beats single-agent fine-tuning and text-recursive baselines on benchmarks including MATH500, AIME 2026, GPQA-Diamond, LiveCodeBench, and MedQA.
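Speedup factors and percentage reductions are easy to misread side by side, so here is the arithmetic behind the reported ranges. A speedup of s removes 1 - 1/s of the wall-clock time, so 1.2x to 2.4x means roughly 17% to 58% less end-to-end time. The 10,000-token baseline is a hypothetical figure for illustration, not a number from the paper.

```python
def wall_time_saved(speedup):
    """Fraction of end-to-end time removed by a given speedup factor."""
    return 1.0 - 1.0 / speedup

def tokens_remaining(baseline_tokens, reduction):
    """Tokens left after a fractional token reduction."""
    return baseline_tokens * (1.0 - reduction)

# Reported ranges; the 10,000-token baseline is hypothetical.
for s in (1.2, 2.4):
    print(f"{s}x speedup -> {wall_time_saved(s):.1%} less wall time")
for r in (0.346, 0.756):
    print(f"{r:.1%} reduction -> {tokens_remaining(10_000, r):,.0f} of 10,000 tokens")
```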

The structural point is that agents may not need more conversation. They may need less serialization. If latent links hold up outside the authors' benchmarks, the agent stack starts to look less like a Slack channel of models and more like a trainable computation graph, where credit assignment can flow through the whole system. That would pull value away from brittle framework glue and toward the interfaces that preserve state between model calls.
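The credit-assignment point can be made concrete with a one-parameter toy. Emitting a token is a discrete choice, so a training signal cannot flow back through it; a continuous message can carry a gradient. The sketch below is an illustration under stated assumptions, not the paper's training setup: rounding stands in for "pick a token," a scaled value stands in for a latent link, and the gradient is estimated with central finite differences.

```python
def loss(w, x=0.7, target=1.0, discrete=False):
    """Squared error after sending w * x through a channel.

    discrete=True rounds the message (toy stand-in for emitting a token);
    discrete=False passes the continuous value (toy stand-in for a latent link).
    """
    message = w * x
    if discrete:
        message = round(message)
    return (message - target) ** 2

def grad(f, w, eps=1e-6, **kwargs):
    """Central finite-difference estimate of df/dw."""
    return (f(w + eps, **kwargs) - f(w - eps, **kwargs)) / (2 * eps)

print(grad(loss, 1.0))                 # continuous channel: about -0.42
print(grad(loss, 1.0, discrete=True))  # rounded channel: 0.0, no learning signal
```

Analytically the continuous loss is (0.7w - 1)^2, whose derivative at w = 1 is 1.4 x (-0.3) = -0.42; the rounded channel is flat almost everywhere, which is why text-mediated pipelines are hard to train end to end.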

Room for disagreement: the paper is still an early research result, not independent production evidence. The GitHub implementation is new, the evaluated systems use relatively small specialist models, and latent coordination is harder to inspect than text. If the method cannot be audited or debugged cleanly, some of the efficiency gain will reappear as operational risk.

What to watch: the key variable is whether an independent agent framework implements latent-state communication without requiring full model access. If it only works when researchers can touch hidden states directly, adoption will stay narrow.

ProDa Makes Training Data Debuggable

Fine-tuning on domain corpora is usually a blunt instrument. When the model fails, teams add more documents, more synthetic examples, or more instructions, then hope the next run learned the missing concept.

Programming with Data (ProDa), released by the OpenRaiser team, proposes a sharper loop. ProDa extracts a structured knowledge representation from raw corpora and uses that same structure to generate training data and evaluation questions. Its project page describes the analogy directly: training data is source code, training is compilation, benchmarking is unit testing, and failure-driven data repair is debugging. The released ProDalib suite spans 16 disciplines with 48,000 curated chunks, 227,869 atomic concepts, 186,784 relational statements, 43,953 reasoning chains, 16,000 benchmark items, and 160,000 synthesized training samples.
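The key design move is that training samples and benchmark items share one specification. A minimal sketch of that idea, assuming a hypothetical schema (`Concept`, `ReasoningChain`, `KnowledgeSpec`, and `synthesize` are illustrative names, not ProDalib's API) and trivial templates in place of LLM-based generation: every generated item carries the IDs of the spec nodes it came from. Relational statements are omitted for brevity but would follow the same pattern.

```python
from dataclasses import dataclass, field

@dataclass
class Concept:
    cid: str
    name: str
    definition: str

@dataclass
class ReasoningChain:
    rid: str
    steps: list        # ordered reasoning steps
    concept_ids: list  # concepts the chain depends on

@dataclass
class KnowledgeSpec:
    concepts: dict = field(default_factory=dict)
    chains: list = field(default_factory=list)

def synthesize(spec):
    """Emit training samples and benchmark items from the same spec,
    tagging each item with the spec nodes it was generated from."""
    train, bench = [], []
    for c in spec.concepts.values():
        train.append({"text": f"{c.name}: {c.definition}", "sources": [c.cid]})
        bench.append({"question": f"What is {c.name}?", "answer": c.definition,
                      "sources": [c.cid]})
    for ch in spec.chains:
        sources = [ch.rid, *ch.concept_ids]
        train.append({"text": " -> ".join(ch.steps), "sources": sources})
        bench.append({"question": f"What follows from: {ch.steps[0]}?",
                      "answer": ch.steps[-1], "sources": sources})
    return train, bench

spec = KnowledgeSpec(
    concepts={
        "c1": Concept("c1", "osmosis", "net movement of water across a semipermeable membrane"),
        "c2": Concept("c2", "tonicity", "relative solute concentration of two solutions"),
    },
    chains=[ReasoningChain("r1", ["cell placed in hypertonic solution",
                                  "water leaves by osmosis", "cell shrinks"], ["c1", "c2"])],
)
train, bench = synthesize(spec)
```

Because both lists are tagged with the same node IDs, a benchmark failure points at specific concepts or chains rather than at "the corpus."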

Why it matters: ProDa is interesting because it makes synthetic data accountable. The weak version of synthetic training says "generate more examples." The stronger version asks which concept or reasoning chain caused a failure, patches that part of the data specification, and reruns the test. That is a different control loop. On the authors' reported ProDa-16 results, Llama-3.1-8B moves from 30.35% to 63.02% after the repair pass, Qwen-2.5-3B moves from 50.42% to 67.87%, and Qwen-2.5-14B moves from 70.12% to 76.30%. The gains shrink on stronger bases, which is exactly what one would expect if the method is closing identifiable data gaps rather than sprinkling magic over every model.
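The "gains shrink on stronger bases" observation can be checked directly from the reported ProDa-16 numbers. The sketch also divides each gain by the remaining headroom to 100%; that normalization is our own framing, not one the authors report. By either measure, the weakest base benefits most.

```python
# (before, after) ProDa-16 accuracies, in percent, as reported for the repair pass.
results = {
    "Llama-3.1-8B": (30.35, 63.02),
    "Qwen-2.5-3B": (50.42, 67.87),
    "Qwen-2.5-14B": (70.12, 76.30),
}

for model, (before, after) in results.items():
    gain = after - before
    headroom_closed = gain / (100.0 - before)  # share of the remaining gap to 100%
    print(f"{model}: +{gain:.2f} points, {headroom_closed:.0%} of headroom closed")
```

The absolute gains are 32.67, 17.45, and 6.18 points, closing roughly 47%, 35%, and 21% of the remaining headroom respectively.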

The broader shift is from data volume to data diagnostics. That matters because model improvement is increasingly bottlenecked by knowing why an example works, not merely owning enough examples. ProDa's real contribution is not the software analogy. It is the shared specification: because the benchmark and training samples come from the same knowledge graph, failures can be traced back to missing concepts, broken relations, or weak reasoning chains. That turns data curation from taste into a feedback system.
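Tracing failures back to the shared specification can be as simple as counting which spec nodes appear in failed benchmark items. This is a minimal sketch, not ProDa's actual attribution method: it assumes each benchmark item carries the IDs of its source nodes, uses exact-match grading, and treats `min_failures` as a hypothetical threshold for deciding what to patch.

```python
from collections import Counter

def trace_failures(bench_items, predictions):
    """Count failed benchmark items per source node (concept, relation, or chain id)."""
    blame = Counter()
    for item in bench_items:
        # Exact-match grading is a simplifying assumption.
        if predictions.get(item["id"]) != item["answer"]:
            blame.update(item["sources"])
    return blame

def patch_targets(blame, min_failures=2):
    """Spec nodes implicated often enough to justify a targeted data patch."""
    return sorted(node for node, n in blame.items() if n >= min_failures)

bench = [
    {"id": "q1", "answer": "A", "sources": ["c1"]},
    {"id": "q2", "answer": "B", "sources": ["c1", "r1"]},
    {"id": "q3", "answer": "C", "sources": ["c2"]},
    {"id": "q4", "answer": "D", "sources": ["r1"]},
]
predictions = {"q1": "X", "q2": "X", "q3": "C", "q4": "X"}

blame = trace_failures(bench, predictions)
print(patch_targets(blame))  # ['c1', 'r1']: regenerate data for these nodes, then retest
```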

Room for disagreement: the benchmark is partly born from the same structure used to build the training data, so it may reward the loop's own worldview. The hard evidence would be transfer: repaired corpora should improve unrelated external exams, human-written domain tasks, or real downstream workflows without overfitting to ProDa-16.

What to watch: whether domain fine-tuning papers start reporting "data patches" the way software teams report bug fixes: named failure, local repair, regression test, and external validation.
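A "data patch" reported like a bug fix would carry the four fields named above. The record below is a hypothetical format, not something ProDa or any paper defines; its acceptance rule encodes the transfer concern from the previous paragraph by rejecting a patch that fixes the in-distribution failure while regressing on an external test set.

```python
from dataclasses import dataclass

@dataclass
class DataPatch:
    failure: str              # named failure
    repaired_nodes: list      # spec nodes whose training data was regenerated
    regression_before: float  # accuracy on the affected in-distribution items
    regression_after: float
    external_before: float    # accuracy on an external, human-written test set
    external_after: float

    def accept(self, tolerance=0.0):
        """Keep the patch only if it fixes the targeted failure
        and does not hurt external performance beyond the tolerance."""
        fixed = self.regression_after > self.regression_before
        transfers = self.external_after >= self.external_before - tolerance
        return fixed and transfers

good = DataPatch("confuses osmosis with diffusion", ["c1"], 0.40, 0.85, 0.62, 0.64)
overfit = DataPatch("misapplies tonicity chain", ["r1"], 0.35, 0.90, 0.62, 0.55)
print(good.accept(), overfit.accept())  # True False
```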

The Contrarian Take

Everyone says: agents and domain models need stronger frontier models underneath them.

Here's why that's wrong, or at least incomplete: today's best evidence points to interfaces, not just intelligence. RecursiveMAS improves agent systems by changing how intermediate state moves. ProDa improves domain learning by changing how failures map back to data. DV-World and AutoResearchBench, below, show the same pattern from the evaluator side: the gap is not that models cannot produce fluent artifacts. The gap is that systems still lack the right surfaces for state, semantics, and verification.

Under the Radar

- Salesforce maps the compound-AI serving problem: a production deployment study for Agentforce and ApexGuru reports more than 50% lower P95 tail latency, up to 3.9x higher throughput, and 30% to 40% cost savings versus static deployments. The useful part is the failure taxonomy: multi-model fan-out, cascading cold starts, and heterogeneous scaling are agent-specific infrastructure problems, not generic LLM serving problems.

- Scientific agents keep failing silently: in Plausible but Wrong, CMBAgent improves about 6x on astrophysical tasks when given domain context, but the dominant failure mode is syntactically valid code that produces plausible but wrong results. That echoes last week's story on AI scientists ignoring evidence, and the lesson is the same: scientific agents fail most dangerously when nothing looks broken.
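Why is fan-out an agent-specific tail-latency problem? A request that calls several models in parallel waits for the slowest one, so rare events per call become common per request. The simulation below uses made-up numbers, not Salesforce's: each call takes 50 to 150 ms, with a hypothetical 2% chance of a 2,000 ms cold start. One call hits a cold start 2% of the time, below the 5% that P95 ignores; a four-way fan-out hits at least one about 1 - 0.98^4 ≈ 7.8% of the time, which pushes the cold start into P95.

```python
import math
import random

def p95(samples):
    """Nearest-rank 95th percentile."""
    ordered = sorted(samples)
    return ordered[math.ceil(0.95 * len(ordered)) - 1]

def call_latency_ms(rng, cold_start_prob=0.02, cold_start_ms=2000.0):
    """One model call: 50-150 ms normally, plus a rare cold start (hypothetical numbers)."""
    latency = rng.uniform(50.0, 150.0)
    if rng.random() < cold_start_prob:
        latency += cold_start_ms
    return latency

def request_latency_ms(rng, fan_out):
    """A request that fans out to several models waits for the slowest call."""
    return max(call_latency_ms(rng) for _ in range(fan_out))

rng = random.Random(0)
single = [request_latency_ms(rng, fan_out=1) for _ in range(20_000)]
fanned = [request_latency_ms(rng, fan_out=4) for _ in range(20_000)]
print(f"P95 single model: {p95(single):.0f} ms")
print(f"P95 fan-out of 4: {p95(fanned):.0f} ms")
```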

Quick Takes

- DV-World tests data-visualization agents in software, not sandboxes. The 260-task benchmark spans spreadsheet-native charting, reference-visual adaptation, and ambiguity handling with a user simulator. Leading models still score below 50% overall, which suggests enterprise "chart agent" demos are ahead of the measured ability to repair, transfer, and clarify visual work. (Source)

- AutoResearchBench makes Deep Research look much less solved. The benchmark covers 1,000 questions across 195 CS topics and over 50,000 papers. Even strong systems that perform well on general browsing benchmarks reach only 9.39% Deep Research accuracy and 9.31% Wide Research IoU in the authors' headline setting. (Source)

- A four-kilobyte semantic layer beats model shopping for analytics. A paired text-to-SQL benchmark gives Claude Opus 4.7, Claude Sonnet 4.6, and GPT-5.4 either a database schema alone or the schema plus a 4 KB markdown description of business semantics. The document adds 17 to 23 accuracy points, while models in the same tier become statistically indistinguishable from one another. (Source)

- Pruning may help test-time scaling instead of hurting it. Doing More With Less finds that unstructured pruning can outperform structured pruning and sometimes even the unpruned model on reasoning benchmarks for s1.1-7B and Qwen3-8B. That cuts against the assumption that reasoning models must keep every weight to benefit from extra test-time compute. (Source)
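Why can a short document beat a model upgrade on text-to-SQL? Column names rarely encode business rules. The sketch below is a hypothetical illustration, not the benchmark's setup: a one-table schema, a one-rule semantic layer, and two hand-written queries standing in for what a model might plausibly produce from each prompt. The schema alone gives no hint that refunded orders are excluded from revenue; the semantic note makes the rule explicit, and the answer changes.

```python
import sqlite3

SCHEMA = "CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL, status TEXT);"

# Hypothetical excerpt of a markdown semantic layer.
SEMANTIC_DOC = """\
## Revenue
Revenue is the sum of `orders.amount`, excluding rows where `status = 'refunded'`.
"""

def build_prompt(question, semantic_doc=None):
    """Schema-only prompt, or schema plus the business-semantics document."""
    parts = [f"Schema:\n{SCHEMA}"]
    if semantic_doc is not None:
        if len(semantic_doc.encode("utf-8")) > 4096:
            raise ValueError("semantic layer exceeds 4 KB")
        parts.append(f"Business semantics:\n{semantic_doc}")
    parts.append(f"Question: {question}")
    return "\n\n".join(parts)

# Hand-written stand-ins for plausible model output, not real model responses.
SCHEMA_ONLY_SQL = "SELECT SUM(amount) FROM orders"
WITH_SEMANTICS_SQL = "SELECT SUM(amount) FROM orders WHERE status != 'refunded'"

conn = sqlite3.connect(":memory:")
conn.execute(SCHEMA)
conn.executemany(
    "INSERT INTO orders (amount, status) VALUES (?, ?)",
    [(100.0, "paid"), (250.0, "paid"), (80.0, "refunded")],
)
print(conn.execute(SCHEMA_ONLY_SQL).fetchone()[0])     # 430.0
print(conn.execute(WITH_SEMANTICS_SQL).fetchone()[0])  # 350.0
```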

The Thread

The common thread is that AI progress is moving from the model's public answer to the system's hidden substrate. RecursiveMAS changes the substrate between agents. ProDa changes the substrate beneath training data. The semantic-layer result changes the substrate beneath database schemas. Salesforce's compound-serving study changes the substrate under agent deployment. This is the less flashy frontier: not a larger model, but fewer lossy translations between what the system knows, what it tests, and what it repairs.

Predictions

New predictions:

- I predict: at least one open agent framework or research implementation will expose a RecursiveMAS-style latent-state communication path, or a close substitute, in a public release by 2026-08-29. (Confidence: medium; Check by: 2026-08-29)

- I predict: by 2026-09-30, at least two domain-model training papers will report failure-traced data patching, with concept-level or reasoning-chain repair, instead of only adding larger undifferentiated synthetic corpora. (Confidence: medium; Check by: 2026-09-30)

Generated 2026-04-29 03:36 ET
